8. THE HALPERN THINKING ASSESSMENT TASKS

8.1 INTRODUCTION

This section discusses the tasks in the assessments which seem to have the greatest potential for assessing the four different kinds of thinking.

As stated in section 1.4 of this report, the researcher shares the view of Halpern (2003, page 361) that the type of test format most suited to the assessment of thinking uses:

• an open-ended response format

• specific questions that probe the reasoning behind an answer

There are many tasks in the NEMP assessments which clearly involve one or more of the categories of thinking in which we are interested, but which examine only the results of that thinking and not the processes through which the student went to achieve those results. That is, they fail to satisfy the second of Halpern’s criteria.

The only task format which is likely to satisfy both of the criteria is the one-to-one interview format, in which the student works individually with a teacher and the whole session is recorded on videotape. The team and independent formats also involve some videotaping, but there is not the same opportunity for probing the student’s reasoning in these formats.

Consequently, the only NEMP tasks which seem to satisfy Halpern’s criteria are those which:

• are in a one-to-one interview format

• are open-ended

• ask for explanations or justifications

For want of a better term, such tasks will be referred to as Halpern tasks. Whether or not the potential of these tasks was realised in their marking and reporting is also considered.
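The three criteria listed above amount to a simple conjunction, which can be sketched in a few lines of Python. This is only an illustration: the `Task` record, its field names, and the example tasks are hypothetical and do not come from the NEMP data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical record of one assessment task (field names are illustrative)."""
    name: str
    task_format: str            # e.g. "one-to-one", "team", "independent"
    open_ended: bool            # does the task allow an open-ended response?
    asks_for_explanation: bool  # is the student asked to explain or justify?


def is_halpern_task(task: Task) -> bool:
    """A task counts as a Halpern task only if all three criteria hold."""
    return (
        task.task_format == "one-to-one"
        and task.open_ended
        and task.asks_for_explanation
    )


# Made-up examples, one per way of passing or failing the criteria.
examples = [
    Task("interview with probing", "one-to-one", True, True),
    Task("interview without probing", "one-to-one", True, False),
    Task("team activity", "team", True, True),
]

halpern = [t.name for t in examples if is_halpern_task(t)]
print(halpern)
```

Only the first example passes: the second is open-ended but never probes the reasoning, and the third is not in the one-to-one format.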

8.2 THE DISTRIBUTION OF HALPERN THINKING TASKS

The table below indicates:

• the subject area

• the number of tasks judged to involve each of the forms of thinking

• the number of Halpern thinking tasks for each form of thinking

• the total number of thinking tasks and Halpern thinking tasks

• the number of Halpern thinking tasks in which the potential was realised in the marking and reporting criteria

| Subject | Thinking tasks: Critical | Thinking tasks: Creative | Thinking tasks: Reflective | Thinking tasks: Logical | Halpern tasks: Critical | Halpern tasks: Creative | Halpern tasks: Reflective | Halpern tasks: Logical |
|---|---|---|---|---|---|---|---|---|
| Science | 2 | 1 | 5 | 14 | 2 | 0 | 1 | 1 |
| Art | 5 | 10 | 4 | 0 | 5 | 0 | 4 | 0 |
| GTM | 1 | 0 | 2 | 3 | 0 | 0 | 0 | 0 |
| Music | 0 | 8 | 1 | 0 | 0 | 0 | 1 | 0 |
| Tech | 5 | 1 | 3 | 6 | 2 | 0 | 0 | 2 |
| Read/Speak | 0 | 6 | 6 | 2 | 0 | 0 | 0 | 0 |
| Info Skills | 0 | 0 | 6 | 2 | 0 | 0 | 0 | 1 |
| Soc Studies | 0 | 0 | 6 | 1 | 0 | 0 | 0 | 0 |
| Maths | 0 | 0 | 0 | 10 | 0 | 0 | 0 | 0 |
| Listen/View | 5 | 1 | 4 | 3 | 5 | 0 | 2 | 0 |
| Health/PE | 1 | 0 | 14 | 0 | 0 | 0 | 3 | 0 |
| Writing | 2 | 13 | 4 | 2 | 0 | 0 | 0 | 0 |
| Total | 21 | 40 | 55 | 43 | 14 | 0 | 11 | 4 |
| No of Halpern tasks realising potential | | | | | 11 | 0 | 10 | 1 |
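As a cross-check on the table above, the column totals can be recomputed from the per-subject counts, along with the share of thinking tasks of each kind that met the Halpern criteria. The sketch below simply transcribes the table; the variable and function names are illustrative and not part of the original study.

```python
# Task counts transcribed from the table above.
# Each row: subject -> (thinking tasks, Halpern tasks), both ordered as
# (critical, creative, reflective, logical).
KINDS = ("critical", "creative", "reflective", "logical")

COUNTS = {
    "Science":     ((2, 1, 5, 14), (2, 0, 1, 1)),
    "Art":         ((5, 10, 4, 0), (5, 0, 4, 0)),
    "GTM":         ((1, 0, 2, 3),  (0, 0, 0, 0)),
    "Music":       ((0, 8, 1, 0),  (0, 0, 1, 0)),
    "Tech":        ((5, 1, 3, 6),  (2, 0, 0, 2)),
    "Read/Speak":  ((0, 6, 6, 2),  (0, 0, 0, 0)),
    "Info Skills": ((0, 0, 6, 2),  (0, 0, 0, 1)),
    "Soc Studies": ((0, 0, 6, 1),  (0, 0, 0, 0)),
    "Maths":       ((0, 0, 0, 10), (0, 0, 0, 0)),
    "Listen/View": ((5, 1, 4, 3),  (5, 0, 2, 0)),
    "Health/PE":   ((1, 0, 14, 0), (0, 0, 3, 0)),
    "Writing":     ((2, 13, 4, 2), (0, 0, 0, 0)),
}

# Last row of the table: Halpern tasks whose potential was realised.
REALISED = (11, 0, 10, 1)


def column_totals(group):
    """Sum each thinking kind over all subjects; group 0 = thinking, 1 = Halpern."""
    return tuple(
        sum(row[group][k] for row in COUNTS.values()) for k in range(len(KINDS))
    )


thinking_totals = column_totals(0)
halpern_totals = column_totals(1)

for kind, n_thinking, n_halpern, n_realised in zip(
    KINDS, thinking_totals, halpern_totals, REALISED
):
    share = n_halpern / n_thinking  # every thinking total in the table is non-zero
    print(
        f"{kind:<10} thinking={n_thinking:>2} halpern={n_halpern:>2} "
        f"({share:.0%}) realised={n_realised:>2}"
    )
```

The recomputed totals agree with the Total row of the table (21, 40, 55, 43 thinking tasks and 14, 0, 11, 4 Halpern tasks).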

8.3 DIFFERENCES RELATING TO THINKING CLASSIFICATION

The most obvious feature of this table is that, although there were a good number of tasks involving creative and logical thinking in the assessments, none of the creative tasks and few of the logical tasks satisfied the Halpern thinking task criteria.

Of the 40 creative tasks, only 5 used a one-to-one format, and in none of these were the students asked to explain or justify their responses. This is not surprising. From an assessment point of view, it seems reasonable to assume that the creativity of an art work, a piece of music, or a story can be judged by looking at the end result. It would also be impractical and unnecessary to have a teacher observing all the time an art work was being made or a story written. However, in the art assessments, for example, there are some excellent examples of students being asked to think critically and reflectively on the work of others, and it does seem that it would be worthwhile to ask them to consider their own creativity in the same way. Perhaps this is not practicable in the NEMP context, but it should certainly be encouraged in the classroom.

The situation is a little different in the logical tasks. Thirteen of the 43 logical thinking tasks used the one-to-one format, and there is no obvious practical reason why this number could not have been greater. There was some probing of reasoning, but the researcher felt that it was relatively superficial, and this is reflected in the fact that only one of the Halpern logical tasks realised its potential. There is, it seems, an unwarranted tendency to assume that if a student achieves the correct answer for a question involving logical thinking then the thinking must have been sound. There is also the fact that the logical thinking tasks tend to be less open-ended than those involving the other kinds of thinking.

In contrast, 16 of the 21 critical thinking tasks used the one-to-one format and many of them made the most of the opportunities for open-ended questions and response probing which this format provides. There is little doubt that this is the area in which the NEMP assessments were most successful in assessing the thinking of students.

Of the 55 reflective thinking tasks, 26 used the one-to-one format and the majority of these were open-ended. However, in a significant number of tasks the responses of students were recorded but not probed.

8.4 SUBJECT AREA DIFFERENCES

It is clear from the above table that the distribution of Halpern thinking tasks is not even over the different subject areas. In interpreting this, it is important to recognise that the NEMP assessments are not principally designed to monitor thinking skills. If they do this, it is likely to be as a by-product of other objectives.

Graphs, Tables and Maps; Reading and Speaking; Information Skills; Social Studies; and Mathematics, which contributed hardly any Halpern tasks, perhaps tend to be less open-ended than other subjects, and consequently good thinking assessment tasks are less likely to arise in the usual assessment patterns of these subjects. If thinking is to be successfully assessed in these areas, it seems that specific questions might be required.

Because no creative thinking tasks fitted the Halpern criteria, the more creative subjects of Art, Music, Reading and Speaking, and Writing appear strongly on the table of tasks which involve thinking skills but less strongly in the Halpern tasks than they might have done.

Science, Technology, and Listening and Viewing covered a wide range of thinking tasks.

The thinking in Health and Physical Education was principally reflective, although in a number of tasks it seemed that the assessment was mostly concerned with the student’s opinion rather than the thinking behind that opinion.

The subject area which stands out most in the table is Art. In the three assessments undertaken, there were 28 assessment tasks in total, of which 19 were judged to require thinking skills. Ten of these were creative thinking tasks, not in the one-to-one format and consequently not included in the Halpern tasks. However, there is little doubt that the creative thinking of these tasks was evident in the work which the students produced, even if the thinking was not probed. Of the other 9 tasks, all were in the Halpern task category. Only logical thinking was missing.

8.5 TWO EXAMPLES OF VERY GOOD THINKING ASSESSMENT TASKS

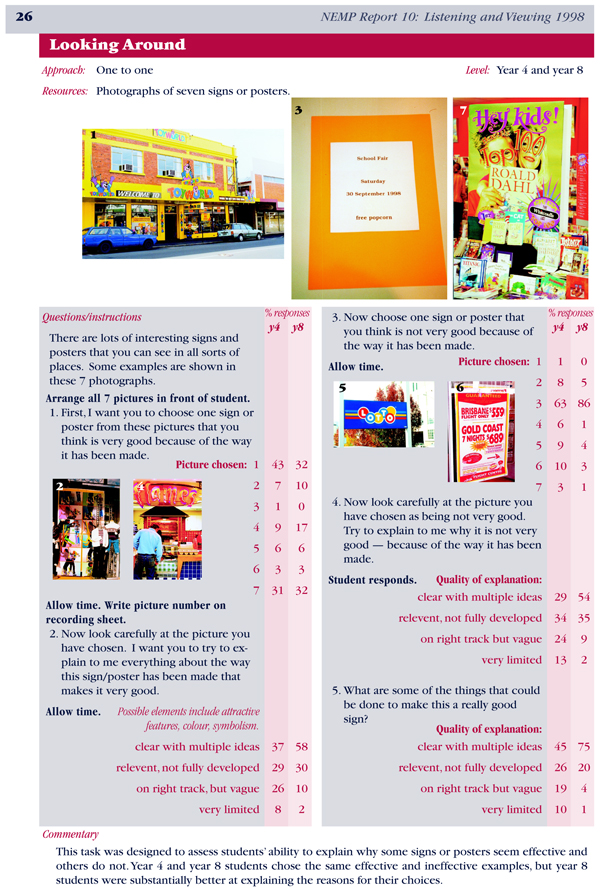

The two tasks which follow are, in the researcher’s opinion, examples of the best thinking assessment tasks in the NEMP assessments. The first is a critical thinking task taken from the 1998 Listening and Viewing assessment.

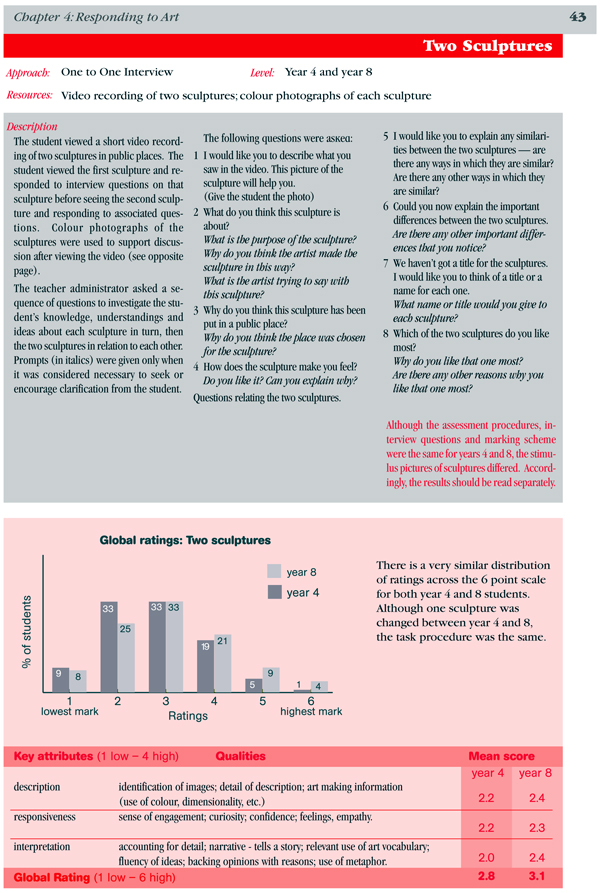

The second is a reflective thinking task from the 1995 Art assessment.

As explained earlier, none of the creative thinking tasks involved probing the thinking of students and none of the logical thinking tasks stood out as being particularly good.

The first task is clearly evaluative and consequently involves critical thinking. The initial questions are open-ended: there is no 'correct' answer. The students are then required to explain and justify their responses, and these explanations are evaluated in the marking criteria.

The second task was considered to be predominantly a reflective thinking task, although there is an evaluative element towards the end. The student is asked to reflect on the sculptures: what they are about, why they are there, and how they make the student feel. These are clearly open-ended questions, and the responses to them are probed ('Can you explain why?'). The responses to these probes are clearly evaluated in the marking criteria under the category of responsiveness.

9. TASKS FOR FUTURE RESEARCH

The final research question for this study was:

Is it possible to identify particular tasks, presented in a one-to-one interview format, the videotapes from which would be likely to enable a researcher, in a subsequent study, to explore the nature of the thinking which was actually used by students?

It does seem that it is possible. The tasks would need to be Halpern tasks as discussed in the previous section, and this would preclude the creative thinking tasks. There did not seem to be any obvious candidates in the logical thinking tasks either. However, the responses to a number of the critical and reflective thinking tasks did appear to be worthy of further examination.

The two tasks in section 8.5, for example, would both be suitable. In both cases students were asked to explain or justify their responses. The marking criteria then asked the assessors to classify the explanations:

| Task | Marking criteria |
|---|---|
| Looking around | Quality of explanation: clear with multiple ideas; relevant, not fully developed; on right track but vague; very limited |
| Two sculptures | Responsiveness (how it makes you feel): sense of engagement, curiosity, confidence, feelings / empathy. Scale: underdeveloped; slightly developed; moderately developed; highly developed |

A further examination of the videotapes might enable a researcher to focus on the nature of the thinking behind the responses as well as judging their overall quality. There is almost certainly more useful information in the videotapes than was used in the initial assessment.